Microservice architectures in which each service is maintained by a single team present some challenges to testing: challenges that are partly outside the realm of developer testing, but that can be tackled with developer testing techniques. The classic problem is this: the system, made up of services A, B, and C, is for practical purposes observed and controlled through the UI. In addition, service B depends on service C. Now, how do you deploy and test that system with confidence? And who does it?

It’s not unheard of that the great honour is bestowed upon testers, who may or may not employ test automation to avoid the tedium. And while the testers may or may not know the system well enough to test it in its entirety while the deployment is being prepared, they usually have neither the skills, the time, nor the permissions to perform the actual deployment. Somebody else does. This setup leads to frustration, long testing and development cycles, and bugs slipping through the cracks.

It’s hard to provide a silver bullet solution to a problem of this complexity, but here’s an attempt to address the majority of common setups.

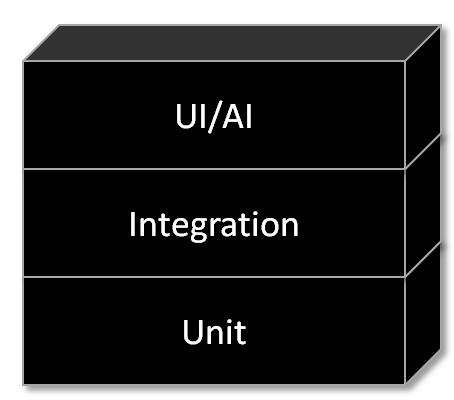

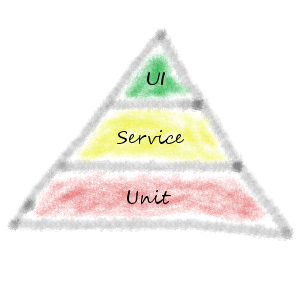

First, note the similarity between the hierarchy of the services and the test pyramid.

To utilize the analogy, let’s remind ourselves that unit tests constitute the bulk of (developer) tests. This is analogous to having the feature teams employ enough testing to ensure that their services work in isolation. It means that each team is responsible for writing unit tests, integration tests, and any other tests needed to ensure that their service works and can be modified safely. This includes managing the test data for their services. In the figure above, team B also needs to write tests that ensure that the integration with service C works. When all teams have achieved full coverage of their individual services, we can view this as full “unit test” coverage of the system.

Next, the services need to be integrated with the UI. Before looking at pixels on the screen, we must acknowledge that the user interface seems to be some kind of orchestrator, which means that integration tests are needed for whatever it’s orchestrating. Such tests should be maintained by whichever team deploys the system. After all, in this setup, it’s their job to make sure that the deployed system works as a whole. One might argue that it’s cumbersome, unfair, or impractical to have that team own the tests, but keep in mind that feature teams A through C have done their homework by ensuring that their services and integrations have been tested and work as expected. Plainly speaking, the team that performs the integration needs a way to ensure that it works, and automated tests are the tool, just like in the lower layers.
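As a rough illustration, an orchestration-level test owned by the deploying team might look like the sketch below. Everything named here is hypothetical and not taken from any particular system: the `Orchestrator` class, its `customer_overview` method, and the two client interfaces are stand-ins for whatever the UI layer actually coordinates. The in-memory clients exist only to make the sketch runnable; in a real pipeline the same test would be pointed at the deployed test instances of the services.

```python
from __future__ import annotations

from typing import Protocol


class ServiceAClient(Protocol):
    """Hypothetical interface to service A."""

    def fetch_orders(self, customer_id: str) -> list[str]: ...


class ServiceBClient(Protocol):
    """Hypothetical interface to service B."""

    def fetch_customer_name(self, customer_id: str) -> str: ...


class Orchestrator:
    """Assumed stand-in for the logic behind the UI that combines services."""

    def __init__(self, service_a: ServiceAClient, service_b: ServiceBClient):
        self._a = service_a
        self._b = service_b

    def customer_overview(self, customer_id: str) -> dict:
        # One call per service; the result is what the UI would render.
        return {
            "name": self._b.fetch_customer_name(customer_id),
            "orders": self._a.fetch_orders(customer_id),
        }


class InMemoryServiceA:
    """Placeholder for a deployed test instance of service A."""

    def __init__(self, orders: dict[str, list[str]]):
        self._orders = orders

    def fetch_orders(self, customer_id: str) -> list[str]:
        return list(self._orders.get(customer_id, []))


class InMemoryServiceB:
    """Placeholder for a deployed test instance of service B."""

    def __init__(self, names: dict[str, str]):
        self._names = names

    def fetch_customer_name(self, customer_id: str) -> str:
        return self._names[customer_id]


def test_customer_overview_combines_both_services():
    service_a = InMemoryServiceA({"c-1": ["order-1", "order-2"]})
    service_b = InMemoryServiceB({"c-1": "Test Customer"})

    overview = Orchestrator(service_a, service_b).customer_overview("c-1")

    assert overview == {"name": "Test Customer", "orders": ["order-1", "order-2"]}
```

The point of the sketch is the ownership boundary: the test exercises only the combination of services, and trusts each feature team to have verified its own service.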

As soon as the team starts developing tests that cover the entire system, they realize that they need to know all the ins and outs of the individual services to come up with good test data. Or do they? As a matter of fact, they don’t. It would be unreasonable to have a single team own the test data generation for an entire service-based system. It’s probably just as unreasonable to expect that such a team would know all the business rules. To solve this, teams A through C should publish APIs for test data generation: test data that’s correct for, and relevant to, a single service. The exact specifics are context-dependent, but the goal is to simplify things for the caller of each service.

So, for example, let’s say service B is the unexciting customer service that’s found in most systems. Its team would publish a library or API endpoint tailored to creating one or multiple customers with reasonable default values. The caller would then be relieved of the burden of knowing the specifics of postal addresses, username generation, or the context-specific flags that are typically attached to each customer. If all teams do this, the job of the integrating team is much easier. In a world of good engineering, creating these test data APIs should be very inexpensive, because the teams need to generate the same kind of data for their own tests anyway.
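A minimal sketch of what such a test data library could look like for the customer service follows. All names are assumptions made for illustration (`Customer`, `CustomerTestData`, the field names, and the single `newsletter_opt_in` flag), and the in-memory dictionary merely stands in for populating the test version of service B.

```python
import itertools
import uuid
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    # Hypothetical fields; a real customer service defines its own.
    customer_id: str
    username: str
    postal_address: str
    newsletter_opt_in: bool


class CustomerTestData:
    """Hypothetical helper that team B would publish for other teams."""

    def __init__(self):
        self._counter = itertools.count(1)
        # Stand-in for the test instance of service B.
        self._store = {}

    def create_customer(self, **overrides):
        """Create one customer; callers override only what they care about."""
        n = next(self._counter)
        defaults = {
            "customer_id": str(uuid.uuid4()),
            "username": f"test-user-{n}",
            "postal_address": f"{n} Example Street, 12345 Testville",
            "newsletter_opt_in": False,
        }
        unknown = set(overrides) - set(defaults)
        if unknown:
            raise TypeError(f"unknown customer fields: {sorted(unknown)}")
        customer = Customer(**{**defaults, **overrides})
        self._store[customer.customer_id] = customer
        return customer

    def create_customers(self, count, **overrides):
        """Create several customers that share the same overrides."""
        return [self.create_customer(**overrides) for _ in range(count)]
```

The integrating team would then write something like `CustomerTestData().create_customer(newsletter_opt_in=True)` and never touch address or username rules. Rejecting unknown fields is a deliberate choice in this sketch: a typo in an override fails loudly instead of silently producing default data.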

Given that the “integration” layer has been solved, the “pixels on the screen” part becomes relatively simple. The integrating team can crank out some end-to-end tests that run through the UI, and since the test data problem has already been solved, these tests should be easy to set up. Manual testing should also be quite straightforward at this point.

To sum this up, there are two key points:

- There is a team that’s responsible for deploying the system and ensuring that its components work together to form a whole. This might sound very reasonable but may require merging a testing team with an ops-heavy team and possibly adding a developer or two.

- Feature teams publish APIs or libraries that populate the test versions of their services with good data. Not rocket science, but not standard procedure in the industry either.